Marketing tips, news and more

Explore expert-backed articles on SEO, data, AI, and performance marketing. From strategic trends to hands-on tips, our blog delivers everything you need to grow smarter.

Profitable Organic Content Production with N-Gram Analysis

How to Generate Profitable Organic Content with N-Gram Analysis?

In the e-commerce projects we consult on for SEO, we have all encountered the expectation: “We don’t want to create blog content only to gain organic traffic; our goal is also to generate revenue from the blog content we produce.” After performing the analyses below, tracking user behavior and observing the results of the planned strategy will be the most critical part, but I hope this strategy sparks an idea in your mind. :)

1. Setting Up N-Gram Analysis

For this strategy, the pool of search queries that resulted in sales on the website is first subjected to N-Gram analysis. This analysis breaks search queries down into components of 1, 2, 3, and 4 words and evaluates each component separately.

Ranking the components by metrics such as ROAS, conversions, and CPO yields the top-performing 1-, 2-, 3-, and 4-gram terms. This shows which terms perform best and which have the highest spend.

The analysis provides insight for targeting high-performing terms more heavily and for removing terms with high spend but low contribution to performance from the strategy.

2. List of High-Performing Search Terms via N-Gram Analysis

In Google Ads Search Network campaigns, we identify the main categories for organic content revenue targeting through high-performing generic and short-tail keywords (for example: men, women, children, men’s shoes, children’s t-shirts, women’s blouses).

Seeing the main keywords identified as high-performing in the N-Gram analysis appear again in the 3- and 4-gram versions serves as a validation of the strategy.

Although our SEO efforts target long-tail keywords corresponding to 3- and 4-grams, analyzing 1- and 2-gram main keywords is essential for identifying high-performing umbrella keywords that may drive results.

TIP: Branded queries still have higher sales potential than non-branded queries, so pay extra attention to umbrella keywords within branded queries; these keywords are already familiar to your users and have higher purchase potential.

3. Organic Performance Analysis of High-Performing Keywords

The website’s organic traffic performance is analyzed for the 3- and 4-gram keywords identified as high-performing in Google Ads Search Network campaigns. In this way, in an organic content strategy built around keywords that perform well in Google Ads, you can also evaluate how that performance carries over to the queries targeted by SEO.

4. Organic Content Creation

Finally, blog content with Informational and Transactional intent is created for the keywords identified through the Google Ads and organic performance analyses, with a focus on sales potential.

1. For profitable queries ranking in the top 3 organically, a blog strategy aligned with Transactional intent can be developed, as it is possible to gain authority through blog content.
2. For queries with average organic traffic performance, ranking 4-10, a blog strategy aligned with Transactional intent can also be developed.

In summary, although targeting long-tail queries is still the primary SEO strategy, you can design your blog content strategy for profitability by identifying revenue-generating keywords through N-Gram analysis of generic and short-tail queries and by leveraging the existing organic performance for those queries.

N-Gram analysis is only one method of profitability analysis, but it is an essential step in building the right strategy.

Publishing content alone is not enough in the user’s purchase journey; you can encourage users toward conversion by using effective internal linking within blog content.
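The N-Gram breakdown described in this article can be sketched in a few lines of JavaScript. This is a minimal illustration, not the tooling used in the projects above: the row shape (`query`, `cost`, `revenue`, `conversions`), the function names, and the sample figures are all assumptions, and CPO is taken here to mean cost divided by conversions.

```javascript
// Split a query into lowercase word n-grams of length n.
function ngrams(query, n) {
  const words = query.toLowerCase().trim().split(/\s+/).filter(Boolean);
  const grams = [];
  for (let i = 0; i + n <= words.length; i += 1) {
    grams.push(words.slice(i, i + n).join(' '));
  }
  return grams;
}

// Sum cost, revenue, and conversions per n-gram, then derive ROAS and CPO.
function analyzeNgrams(rows, maxN = 4) {
  const totals = new Map();
  for (const row of rows) {
    for (let n = 1; n <= maxN; n += 1) {
      // A Set ensures a gram repeated inside one query is counted once.
      for (const gram of new Set(ngrams(row.query, n))) {
        const key = `${n}|${gram}`;
        const t = totals.get(key) || { n, gram, cost: 0, revenue: 0, conversions: 0 };
        t.cost += row.cost;
        t.revenue += row.revenue;
        t.conversions += row.conversions;
        totals.set(key, t);
      }
    }
  }
  return [...totals.values()]
    .map((t) => ({
      ...t,
      roas: t.cost > 0 ? t.revenue / t.cost : 0,
      cpo: t.conversions > 0 ? t.cost / t.conversions : null,
    }))
    .sort((a, b) => b.roas - a.roas);
}

// Hypothetical search-term rows, for illustration only.
const sampleRows = [
  { query: "men's shoes", cost: 10, revenue: 80, conversions: 2 },
  { query: "men's leather shoes", cost: 20, revenue: 60, conversions: 1 },
  { query: "women's blouses", cost: 10, revenue: 20, conversions: 1 },
];

const report = analyzeNgrams(sampleRows);
console.log(report.filter((r) => r.n === 1));
```

Sorting by ROAS is only one choice; the same totals can be ranked by spend to find terms with high cost but low contribution.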

Preparing for Privacy Sandbox: What is Storage Access API?

Chrome is gradually phasing out support for third-party cookies to reduce cross-site tracking. This creates a challenge for sites and services that rely on cookies in embedded contexts for user journeys like authentication. The Storage Access API (SAA) allows these use cases to continue while limiting cross-site tracking as much as possible.

What is the Storage Access API?

The Storage Access API is a JavaScript API for iframes to request access to storage permissions that would otherwise be denied by browser settings. Embedded elements with use cases dependent on loading cross-site resources can use this API to request access from the user when needed.

If the storage request is granted, the iframe will be able to access cross-site cookies, just as it would if the user visited that site as a top-level context.

While it prevents the general cross-site cookie access often used for user tracking, it allows specific access with minimal burden on the user.

Use cases

Some third-party embedded elements require access to cross-site cookies to provide a better user experience, something that will no longer be possible after third-party cookies are disabled.

Use cases include:

- Embedded comment widgets that require login session details.
- Social media “Like” buttons that require login session details.
- Embedded documents that require login session details.
- A top-level experience delivered within an embedded video player (e.g., not showing ads to logged-in users, knowing user caption preferences, or restricting certain video types).
- Embedded payment systems.

Many of these use cases involve maintaining login access within embedded iframes.

Using the hasStorageAccess() method

When a site first loads, the hasStorageAccess() method can be used to check whether access to third-party cookies has already been granted.

    // Set a hasAccess boolean variable which defaults to false.
    let hasAccess = false;

    async function handleCookieAccessInit() {
      if (!document.hasStorageAccess) {
        // Storage Access API is not supported, so the best we can do is
        // hope it's an older browser that doesn't block 3P cookies.
        hasAccess = true;
      } else {
        // Check whether access has been granted via the Storage Access API.
        // Note: on page load this is always false initially, so the check
        // could be skipped in this example; it is included for completeness
        // for cases where this is not so obvious.
        hasAccess = await document.hasStorageAccess();
        if (!hasAccess) {
          // Handle the lack of access (covered later)
        }
      }
      if (hasAccess) {
        // Use the cookies.
      }
    }

    handleCookieAccessInit();
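When hasStorageAccess() reports no access, the iframe can ask for it with document.requestStorageAccess(), which browsers only honor in response to a user gesture such as a click. The following is a minimal sketch of that step, not a complete integration: the `requestCookieAccess` helper and the `#sign-in` button are hypothetical names, and the document is passed in as a parameter so the helper can be exercised outside a browser.

```javascript
// Ask for cross-site cookie access. `doc` is the iframe's document;
// it is a parameter (an assumption of this sketch) to keep the helper testable.
async function requestCookieAccess(doc) {
  if (typeof doc.requestStorageAccess !== 'function') {
    // API not supported: assume an older browser that doesn't block 3P cookies.
    return true;
  }
  try {
    // Resolves when access is granted, rejects when it is denied.
    await doc.requestStorageAccess();
    return true;
  } catch (err) {
    return false;
  }
}

// Browser wiring, skipped when no document exists (e.g. in Node).
// '#sign-in' is a hypothetical button inside the embedded iframe.
if (typeof document !== 'undefined') {
  const button = document.querySelector('#sign-in');
  if (button) {
    button.addEventListener('click', async () => {
      const granted = await requestCookieAccess(document);
      console.log(granted ? 'Cookie access granted' : 'Cookie access denied');
    });
  }
}
```

Calling the helper from a click handler matters: a request made without a user gesture is rejected, so it should not run automatically on page load.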

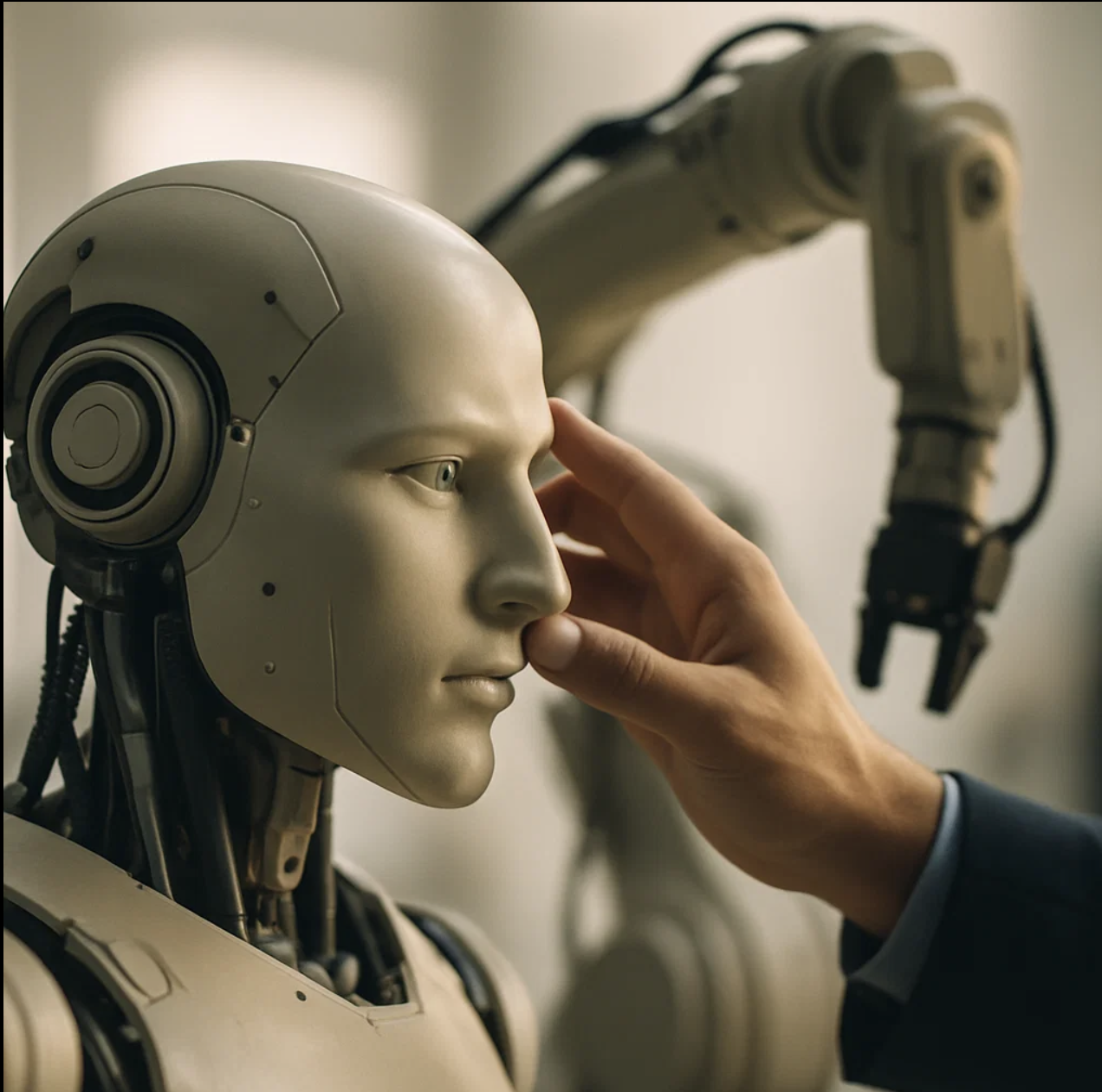

The Power of AI and Automation at AnalyticaHouse

We are delighted to announce that AnalyticaHouse has been selected as a finalist for the coveted “Best Use of AI in Search” award at the European Search Awards 2024!

The Fusion of AI and Automation at AnalyticaHouse

Automation plays a crucial role in enhancing our efficiency at AnalyticaHouse. By automating controllable and standardized operations, we can redirect our efforts toward more creative and strategic thinking. The systems we’ve established reduce our daily workload, allowing us to engage more deeply with the core concepts that define marketing.

We have begun integrating AI into our automation processes, particularly leveraging developments from OpenAI and the user-friendly nature of its API. This integration brings the creative power and processing capacity of AI into the workflows at AnalyticaHouse.

Today, we’ll discuss one of our projects in this realm: the system we developed for SOVOS Turkey, which earned a place as a finalist at the European Search Awards 2024.

Leveraging AI to Overcome Marketing Challenges in a Digital Age

In today’s world, the attention spans and engagement thresholds of users are diminishing day by day. Accelerating technology and intensifying competition make capturing a user’s interest at the first point of contact increasingly challenging. Coupled with constantly evolving user psychology and desires, crafting marketing communications that adapt to changing consumer behaviors presents a significant challenge.

At this juncture, we have merged traditional technologies with the power of AI to create a system that understands user needs and psychological states in real time. This system enables us to identify evolving user personas and generate personalized advertising communications that dynamically adapt to these changes.

Revolutionizing Ad Strategies with AI and Real-Time Data

At this stage, we created a Google Sheets document to organize all data and feed Google Ads with the generated ad copy.

Using Ads scripts, we identified other search terms that the user group interacted with. The script also provided age and gender distribution data for this user group, helping us understand the predominant demographics.

Through Apps Script, we fetched location and interest data for the relevant user group from GA4 and organized these into tables for persona creation.

Additionally, using the SERP API, we retrieved the topics and terms most frequently searched by this user group from Google Trends and added these to our persona table.

We then fed this structured data to GPT-4 Turbo through the OpenAI API using Apps Script. Thanks to predefined business criteria and relevant signals, GPT-4 Turbo was able to generate a detailed persona.

After the Persona Creator script output the persona, it was passed back to GPT-4 Turbo along with the target main keyword, requesting five unique keywords for this persona. The brief given to GPT-4 included dopamine-triggering communication and neuromarketing techniques suitable for B2B marketing, thus enhancing the impact of the generated ad copy.

The ad texts produced were then applied to the relevant ads through Google Ads Customizers and updated weekly so that the data reflected current user behavior and demographics, allowing for dynamically tailored ad copy.

Compared with a static generic ad, the dynamic ad demonstrated a 27% increase in CTR, a 20% reduction in CPC, and a 40% rise in conversions over the testing period.

Evolving with AI: Shaping the Future of Digital Marketing

The world is changing at an increasingly rapid pace, and this acceleration is set to continue. The ease of use of artificial intelligence has led to rapid changes in digital marketing, as it has in various other industries. Traditional technological approaches, when combined with AI and reinforced with validated knowledge, offer incredible potential to keep pace with this changing world, and perhaps even to help create the new.

“In today’s world, executing user-centric marketing operations and being able to optimize them instantaneously based on changing signals carries great significance for achieving both strong performance and cost-effective marketing spend.

Our approach in this project not only highlights the contributions and value of personalized marketing operations but also demonstrates how we can leverage the most advanced technological solutions of the new world in familiar and simple ways. This endeavor may inspire brand-new projects and ideas, and it sets a new benchmark for personalized marketing and the use of AI in the digital marketing realm.

At this transformative moment, we, the AnalyticaHouse team, are both inspired and emboldened by the recognition and appreciation this work has received from a prestigious and respected organization like the European Search Awards. It has been a source of great pride for us and has fueled our motivation on this journey towards the next: to the future.”

Project Owner | Emrecan Karakus - Performance Marketing Manager @AnalyticaHouse

Being selected as a finalist at the European Search Awards is a profound validation of the strategies we’ve deployed and the innovations we’ve cultivated along this path. As an organization, we take great pride in the achievements of our team and the milestones we’ve reached together.

This project is a snapshot of our overarching methodology, showcasing the exceptional service we deliver to our clients and customers. Our diverse team spans Performance Marketing, Data Science, Product Analytics, SEO, Media & Planning, and Marketing Communications, forming a cohesive unit dedicated to overseeing and enhancing every aspect of our clients’ marketing strategies. With this integrated approach, we not only help our clients meet their goals across various industries but also drive them toward pioneering outcomes by implementing operational and strategic initiatives that lead the market and foster innovative solutions.
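The persona step described in this article, where collected signals are passed to GPT-4 Turbo through the OpenAI API, could look roughly like the sketch below. It only builds the request body for the Chat Completions endpoint and sends nothing; the `buildPersonaRequest` helper, the field names in `signals`, the system prompt, and the sample values are all hypothetical and are not taken from the actual project.

```javascript
// Build a Chat Completions request body from audience signals.
// All field names below are assumptions made for this illustration.
function buildPersonaRequest(signals) {
  const lines = [
    `Search terms: ${signals.searchTerms.join(', ')}`,
    `Age and gender: ${signals.demographics}`,
    `Locations: ${signals.locations.join(', ')}`,
    `Interests: ${signals.interests.join(', ')}`,
    `Trending topics: ${signals.trends.join(', ')}`,
  ];
  return {
    model: 'gpt-4-turbo',
    messages: [
      {
        role: 'system',
        content: 'You create a concise B2B marketing persona from audience signals.',
      },
      { role: 'user', content: lines.join('\n') },
    ],
  };
}

// Hypothetical signals, standing in for the rows collected in Google Sheets.
const personaRequest = buildPersonaRequest({
  searchTerms: ['e-invoice software', 'e-archive integration'],
  demographics: '35-44, mostly male',
  locations: ['Istanbul', 'Ankara'],
  interests: ['business software', 'accounting'],
  trends: ['tax regulation changes'],
});

// In Apps Script, this body would be sent as JSON to
// https://api.openai.com/v1/chat/completions (for example with UrlFetchApp.fetch)
// together with an Authorization header carrying the API key.
console.log(JSON.stringify(personaRequest, null, 2));
```

Keeping the prompt assembly in one pure function makes it easy to rerun weekly with fresh rows, which matches the update cadence described above.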

Write e-commerce Purchases to Firestore with sGTM

Server-side Google Tag Manager (sGTM) offers enhanced flexibility and security for tracking and handling data in your e-commerce applications. One powerful application of sGTM is writing purchase data directly to Firestore, Google Cloud's NoSQL database. This blog post will walk you through the process of setting up sGTM to capture e-commerce purchases and store them in Firestore.

Step 1: Creating a New Server-side Google Tag Manager (sGTM) Template

In this step, you'll create a custom template in your server-side Google Tag Manager (sGTM) container. This template defines the logic for capturing e-commerce purchase events and sending the data to Firestore. By creating a reusable template, you streamline the handling and management of purchase data across your e-commerce platform.

You can use this code to write data to Firestore from sGTM.

    const Firestore = require('Firestore');
    const Object = require('Object');
    const getTimestampMillis = require('getTimestampMillis');

    // Start with a timestamp; custom fields are merged in below.
    let writeData = {
      timestamp: getTimestampMillis()
    };

    // Copy each configured field; fields with an empty value are removed.
    if (data.customData && data.customData.length) {
      for (let i = 0; i < data.customData.length; i += 1) {
        const elem = data.customData[i];
        if (elem.fieldValue) {
          writeData[elem.fieldName] = elem.fieldValue;
        } else {
          Object.delete(writeData, elem.fieldName);
        }
      }
    }

    const rows = writeData;

    // The document path and projectId are left empty here; use your own values.
    Firestore.write('', rows, {
      projectId: '',
      merge: true,
    }).then((id) => {
      data.gtmOnSuccess();
    }, data.gtmOnFailure);

Step 2: Configuring the Firestore Database

- Open Google Cloud Console: Navigate to the Firestore section.
- Create Database: Follow the prompts to set up a Firestore database in "production mode" or "test mode" based on your requirements.

You may also create a new rule like this while you are running in test mode:

    rules_version = '2';
    service cloud.firestore {
      match /databases/{database}/documents {
        match /{document=**} {
          allow read, write: if request.auth != null;
        }
      }
    }

Now you can send any purchase data to Firestore.

Contact us for more use cases using server-side Google Tag Manager.
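To see what the template would write for a purchase, the field-mapping loop can be mirrored in plain JavaScript and run outside sGTM. This is only a sketch for reasoning about the output: `buildWriteData`, the sample field names, and the values are hypothetical, and the standard `delete` operator stands in for the sandboxed `Object.delete` call used in the template.

```javascript
// Plain-JavaScript mirror of the template's loop, for testing outside sGTM.
// `customData` is a list of { fieldName, fieldValue } rows, as in the template.
function buildWriteData(customData, nowMillis) {
  const writeData = { timestamp: nowMillis };
  for (const elem of customData || []) {
    if (elem.fieldValue) {
      writeData[elem.fieldName] = elem.fieldValue;
    } else {
      // Empty values are dropped instead of being written.
      delete writeData[elem.fieldName];
    }
  }
  return writeData;
}

// Hypothetical purchase fields, for illustration only.
const purchaseFields = [
  { fieldName: 'transaction_id', fieldValue: 'T-1001' },
  { fieldName: 'value', fieldValue: 149.9 },
  { fieldName: 'currency', fieldValue: 'EUR' },
  { fieldName: 'coupon', fieldValue: '' },
];

const purchaseDoc = buildWriteData(purchaseFields, 1700000000000);
console.log(purchaseDoc);
```

One consequence of the truthiness check is worth noting: a legitimate value of 0 (for example a zero tax amount) is treated like an empty value and left out of the document.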

Preparing for Privacy Sandbox: What is CHIPS?

With Google's postponement of the cookie phase-out process to the first quarter of 2025, many brands and digital marketers must take full advantage of the Privacy Sandbox and the transition process.

That’s why, in the first post of our new blog series, we’ll briefly introduce CHIPS within the scope of the Privacy Sandbox.

CHIPS, or Cookies Having Independent Partitioned State, lets developers opt a cookie into partitioned storage, enhancing user privacy and security through a separate cookie jar for each top-level site.

Without partitioning, third-party cookies allow services to track users and combine their information across unrelated top-level sites, a practice known as cross-site tracking.

Browsers are moving toward phasing out unpartitioned third-party cookies. When third-party cookies are blocked, CHIPS, the Storage Access API, and Related Website Sets will be the only options for reading and writing cookies in cross-site contexts like iframes. (We’ll cover those later.)

Partitioning is currently supported only in Chrome and Edge browsers. It’s not yet available in Firefox or Safari.

For example, imagine you have a website named retail.example and you want to integrate a widget hosted on support.chat.example, a third-party service, to support your users via chatbot.

Many embedded chat services already rely on cookies to store state about user actions.

Without the ability to set cross-site cookies, support.chat.example would have to find more complex alternatives for storing that state. Another option might involve embedding it on retail.example with higher privileges, such as access to authentication cookies, which comes with security and legal risks due to potential exposure of PII (Personally Identifiable Information).

This is where CHIPS comes in, offering an easier way to continue using cross-site cookies without the risks associated with unpartitioned cookies.

So, Where is CHIPS Used?

CHIPS generally applies to any cross-site scenario where subresources require a session or persistent state specific to the user’s interaction within a single top-level site. Common use cases include:

- Embedded third-party chat widgets
- Embedded third-party map widgets
- Embedded third-party payment method widgets
- Subresource CDN load balancers
- Headless CMS providers
- Third-party CDNs using cookies to serve gated content depending on authentication status on the first-party site (e.g., social media profile images hosted on third-party CDNs)
- Remote API endpoints that depend on cookies
- Embedded ads that need publisher-specific state (e.g., capturing ad preferences per website)

More info: https://developers.google.com/privacy-sandbox/3pcd/chips

If you’d like to learn more about cookieless measurement beyond Privacy Sandbox, take a look at our AnalyticaHouse Cookieless project.
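In practice, a cookie is opted into CHIPS by adding the `Partitioned` attribute to the `Set-Cookie` header, together with `Secure`. The sketch below builds such a header value for the chat widget example; the `buildPartitionedCookie` helper, the cookie name, and the value are hypothetical, and the `__Host-` prefix is an optional hardening choice rather than a CHIPS requirement.

```javascript
// Build a Set-Cookie value for a partitioned (CHIPS) cookie.
// Partitioned cookies must be Secure; SameSite=None allows cross-site use.
function buildPartitionedCookie(name, value, maxAgeSeconds) {
  return [
    `__Host-${name}=${encodeURIComponent(value)}`,
    'Secure',
    'Path=/',
    'SameSite=None',
    `Max-Age=${maxAgeSeconds}`,
    'Partitioned',
  ].join('; ');
}

// Hypothetical session cookie set by support.chat.example inside retail.example.
const chatCookie = buildPartitionedCookie('chat_session', 'abc123', 3600);
console.log(`Set-Cookie: ${chatCookie}`);
```

With this header, the cookie set while the widget is embedded on retail.example is stored in that site's partition and is not sent when the same widget is embedded on a different top-level site.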

Best WordPress SEO Plugins

WordPress is one of the most widely used infrastructures for websites today. Its ease of use and drag-and-drop logic, which lets you create the designs you want in a short time, play a major role in its popularity. Naturally, with so many users, developers have increased the number of free plugins available in this area. Many plugins that would require a fee on other platforms are just a few clicks away on the WordPress dashboard and completely free.

One of the biggest reasons for WordPress’s popularity is the abundance of free plugins. Users who know they won’t encounter additional charges can choose the WordPress panel to try out different improvements and analyses on their websites.

As the AnalyticaHouse team, we have compiled the best SEO plugins for websites built on WordPress infrastructure for you.

1- Yoast SEO

Yoast SEO is perhaps one of the most used plugins in WordPress. This powerful tool supports your SEO strategy at every level with its user-friendly interface and rich feature set; it handles everything from titles and meta descriptions to keyword analysis and readability checks. Yoast SEO helps you easily perform the technical optimizations needed to make your website more visible on search engines like Google, so your content reaches your target audience and maximizes your organic traffic potential. In addition, Yoast SEO’s regular updates and educational materials keep you informed about the latest SEO trends and algorithm changes, allowing you to continuously improve your website’s search engine performance.

Pros

- You can perform multiple SEO operations at once, such as keyword optimization, readability, title & description editing, pillar content, free FAQ structure, and free parameter creation.
- It is very easy to use and can be learned quickly with some exploration.
- Supports mobile compatibility of your website.
- Offers a preview for Google SERP results, making optimization easier.
- Provides automatic sitemap generation.
- Allows quick and easy access to robots.txt and .htaccess files.

Cons

- Not all features are available in the free version. The Pro version is paid.
- May cause compatibility issues with some WordPress themes.

2- WP Rocket

Do you have a WordPress-based website that is experiencing speed issues? Then WP Rocket is just the plugin you need. With features like compression and caching, it positively impacts your site’s speed in a very short time.

With its user-friendly interface and automatic settings, it provides instant speed improvements without requiring technical knowledge. WP Rocket dramatically shortens page load times, which improves user experience and positively affects your search engine ranking.

Pros

- Very easy to install. It can be easily installed and activated from the Plugins section in the WordPress navigation area.
- Allows you to perform page caching, GZIP compression, and deferment of JavaScript and CSS files without any technical knowledge.
- You can set up Lazy Load for free, which contributes to your site’s speed.

Cons

- CDN and similar features are only available in the premium package. They are not available in the free version.
- May have compatibility issues with some themes.
- Even in the premium package, support can sometimes be slow.

3- Broken Link Checker

For the SEO health and visibility of your website, technical details are just as important as content. One of the most common issues in internal site health is broken links and missing images. However, scanning can be difficult if your website is large or complex. Broken Link Checker is a plugin that scans and reports broken links in such cases.

Pros

- Helps detect broken links and images on your website.
- Quick and easy to install.
- Allows you to manage redirects very simply.

Cons

- The plugin may give false positives due to temporary or faulty links, which can mislead users.
- Might not detect specific JavaScript-related issues.
- On large or frequently updated sites, constant scanning may overload the server.

4- All in One SEO

Like Yoast SEO, this tool allows you to perform comprehensive SEO configurations. It is one of the most used tools after Yoast SEO and Rank Math. Its setup and functions are quite similar to Yoast.

Its easy-to-use interface appeals to both SEO beginners and experts, offering comprehensive tools to increase a website’s visibility on search engines. AIOSEO allows you to easily handle core SEO tasks such as managing titles, meta descriptions, keyword optimization, social media integration, XML sitemaps, and robots.txt files.

Additionally, it provides concrete feedback on how to optimize your content for search engines through features like content analysis and SEO scoring. Because it can apply SEO settings automatically, the AIOSEO plugin helps users improve their website’s SEO performance without dealing with technical details.

Pros

- Offers extra readability reports and SEO scores.
- Provides quick access to files like XML sitemaps and robots.txt.
- Allows you to quickly perform basic SEO operations (title, description, etc.).

Cons

- Its SEO metrics are not 100% aligned with Google’s latest updates (e.g., keyword count).
- Lacks variety in structured data markup options.

5- Rank Math

Rank Math, similar to Yoast and All in One SEO, makes basic SEO operations easy to perform. It is arguably the second most downloaded SEO plugin after Yoast SEO. Especially for structured data markup, Rank Math allows more detailed and easier configuration, making it ideal for helping search engine bots better understand your pages.

This plugin is very easy to use and lets you clearly see your SEO score, which makes your optimizations even simpler.

Pros

- The most preferred plugin after Yoast SEO.
- Easy and simple to install.
- Does not slow down your site.
- Very efficient for structured data markup.

Cons

- May be a bit complex. The panel may be challenging at first.
- May conflict with other plugins.
- The Pro version offers a more comprehensive service.

6- Lucky WP - Table of Content Plugin

A table of contents is one of the most important elements of semantically structured content. Presenting your content with a table of contents that follows correct heading rules allows both search engine bots and users to better understand and navigate it. If you're running a website on WordPress, you don’t need to write code or perform complex operations to add a table of contents to your blog or other content pages.

The Lucky WP (ToC) plugin has a very simple setup, integrates with your pages, and automatically detects heading tags to create a table of contents effortlessly.

Pros

- Automatically detects your heading tags and helps you add a table of contents without effort.
- Loads quickly and is easy to use.
- Improves user experience within the site and is also an SEO-friendly WordPress plugin.

Cons

- You may face style issues. It might be incompatible with your theme.
- May conflict with other plugins.

7- WP Code

This plugin gives you accessible head and body sections for adding third-party code or applications to your website, so you can insert third-party installations without complexity.

For example, if you want to add a third-party application like Google Tag Manager, which is added to almost every website, GTM provides head and body codes. Instead of looking for these code sections in the theme editor separately, you can add the GTM code to the WP Code plugin. This way, you can develop your site faster and more easily without harming other code in the header.php section.

Pros

- Provides great convenience for those who don’t know how to code.
- Allows you to easily add third-party applications to your site.
- Helps you avoid deleting or modifying head code that is essential for the site.

Cons

- Inserted codes may slow down the site. There is no safeguard for this.
- Offers no extra functionality. It only supports inserting such codes.

8- 301 Redirects

301 redirect operations can sometimes be difficult on WordPress sites, yet they are necessary to preserve existing page authority. The very simple 301 Redirect Plugin allows you to quickly redirect link A to link B on your site with a 301 HTTP status code.

Pros

- Helps you easily configure your 301 redirects.
- No need for theme or other compatibility.
- Quick to install and easy to use.
- 301 redirects work almost 100% correctly.

Cons

- Only allows 301 redirects. You can’t perform other necessary redirects like 302.
- Incorrect configurations can lead to Redirect Chains (broken link chains).