Analytica House

Sep 3, 2022

Adopting a New Tool for Our Automated Jobs: Apache Airflow

Performance marketing combines advertising and innovation to help retailers and affiliates grow their businesses on every front. Each retailer's campaign is carefully targeted so that every party has a chance to succeed and earn. When the work is done properly on all sides, performance marketing delivers profitable results for both retailers and affiliates.

As the Tech Team, we have decided to start blogging about the digital marketing projects we build with our software engineering skills. We develop new solutions for different brands, and our main goal is to improve their performance through data analysis and automation projects.

What do we build as a team?

As the Tech Team, we have embraced a modern approach to performance marketing, and as a result we built an Airflow automation project that generates product reports for our clients on a schedule. For these reports we visit product URLs and collect product information such as unique codes, stock status, discounted prices, discount percentages, and other attributes. We also integrated Google Sheets to add monitoring and reporting capabilities to our automation projects. To guard against problems at deployment time, we run Airflow inside Docker containers, which isolate its environment.
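To make the report fields listed above concrete, here is a minimal sketch of one product record and how it could be flattened into a spreadsheet row. The class name, field names, and sample values are assumptions made for illustration; they are not taken from the actual project, and no Google Sheets call is shown.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ProductRecord:
    """One scraped product. Field names are illustrative assumptions."""
    code: str                                  # unique product code
    url: str                                   # page the data was scraped from
    in_stock: bool                             # stock status
    list_price: float                          # price before discount
    discounted_price: Optional[float] = None   # None when there is no discount

    def discount_percent(self) -> float:
        """Return the discount as a percentage of the list price (0.0 if none)."""
        if self.discounted_price is None or self.list_price <= 0:
            return 0.0
        saved = self.list_price - self.discounted_price
        return round(saved / self.list_price * 100, 2)

    def to_row(self) -> List[object]:
        """Flatten the record into a single row for a sheet or CSV file."""
        stock = "in stock" if self.in_stock else "out of stock"
        return [self.code, self.url, stock,
                self.list_price, self.discounted_price, self.discount_percent()]


# Hypothetical sample data
record = ProductRecord("SKU-001", "https://example.com/p/1", True, 200.0, 150.0)
print(record.to_row())
```

Keeping the percentage calculation in one place means every report derives it the same way from the two scraped prices.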

In short, Airflow is an automation tool for building data workflows (pipelines) for different purposes. Compared with cron jobs, Airflow's user interface lets us monitor our processes in near real time. The logs available on the platform help us a great deal in catching and fixing errors. In addition, when an error occurs we receive e-mail notifications set up through the configuration file, so we can step in right away.

Our core workflow is built on data scraping. To keep it scalable we run worker threads concurrently, which lets us collect data from thousands of URLs within minutes. On Google Cloud we created several virtual machine templates and configured the tasks to run in sequence. The most critical part is passing the data to the reporting processes through a single template.
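The threaded scraping step could look roughly like the sketch below, which uses Python's standard `concurrent.futures` module. The function names, the worker count, and the fake fetcher are assumptions for illustration; the real project's fetching and parsing code is not shown in this post.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
from typing import Callable, Dict, Iterable, Tuple


def scrape_all(
    urls: Iterable[str],
    fetch: Callable[[str], str],
    max_workers: int = 32,
) -> Tuple[Dict[str, str], Dict[str, Exception]]:
    """Fetch every URL on a thread pool and collect results and errors separately."""
    results: Dict[str, str] = {}
    errors: Dict[str, Exception] = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Map each future back to its URL so failures can be attributed.
        futures = {pool.submit(fetch, url): url for url in urls}
        for future in as_completed(futures):
            url = futures[future]
            try:
                results[url] = future.result()
            except Exception as exc:  # one bad URL should not abort the batch
                errors[url] = exc
    return results, errors


def fake_fetch(url: str) -> str:
    """Stand-in for a real HTTP request so the sketch runs without network access."""
    if url.endswith("/broken"):
        raise ValueError("simulated failure")
    return f"<html>{url}</html>"


pages, failed = scrape_all(
    ["https://example.com/a", "https://example.com/broken"],
    fake_fetch,
    max_workers=4,
)
```

Threads suit this kind of work because scraping is dominated by waiting on network responses. Passing the fetch function in as a parameter also makes the pool logic easy to test without touching real sites.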

What Is Airflow?

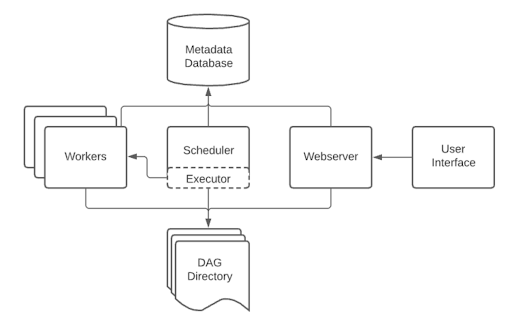

The Airflow documentation gives a good summary of its structure: “A DAG (Directed Acyclic Graph) specifies the dependencies between Tasks, and the order in which to execute them and run retries; the Tasks themselves describe what to do, be it fetching data, running analysis, triggering other systems, or more”[1]. In practice, we can think of Airflow as a much more capable version of cron jobs. The units that actually carry out the work in Airflow are called “workers”.

In Airflow, everything is defined inside a structure called a “DAG”. For example:

with DAG( "SirketX_Urun_Raporu", schedule_interval='@daily', catchup=False, default_args=default_arguments ) as dag:

1- We can define a DAG with a context manager. A DAG carries many settings, such as its schedule, its name, and how tasks are retried on failure. For example:

default_arguments = {
    'owner': 'AnalyticaHouse',
    'start_date': days_ago(1),
    'sla': timedelta(hours=1),
    'email': ['analyticahouse@analyticahouse.com'],
    'email_on_failure': True,
}
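The text mentions retry settings, but the dictionary above does not include any. As a hedged sketch, retries could be added with the standard `retries` and `retry_delay` keys that Airflow operators accept through `default_args`. The specific values below are assumptions, and a fixed `start_date` is used only to keep the snippet free of Airflow imports.

```python
from datetime import datetime, timedelta

# Illustrative values only; tune them to how flaky the scraped sites are.
default_arguments = {
    "owner": "AnalyticaHouse",
    "start_date": datetime(2022, 9, 1),   # assumed fixed date for the sketch
    "retries": 2,                          # re-run a failed task up to two more times
    "retry_delay": timedelta(minutes=5),   # wait between attempts
    "email_on_failure": True,              # notify once the task finally fails
    "email_on_retry": False,               # stay quiet on intermediate retries
}
```

With settings like these, a transient network error would be retried before anyone is e-mailed.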

2- We split the process into tasks, each backed by its own function, and chain them in execution order. For example:

url_task >> scrape_task >> write_to_sheet_task >> find_path_task >> parse_message_task >> write_message_task

3- We define each task with a PythonOperator that runs the corresponding function. For example:

url_task = PythonOperator( task_id='get_url_data', python_callable=get_url_data, )

These operators run the tasks one after another, in the order set by the dependency chain. If a task fails (raises an exception), the downstream tasks do not run, so the rest of the flow stops and Airflow notifies us.
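The stop-on-failure behavior of a linear chain can be illustrated in plain Python. This is a simplified simulation, not Airflow's scheduler: it only mimics how, under the default trigger rule, a failed task leaves its downstream tasks in the `upstream_failed` state. Apart from `get_url_data`, the task names and callables are hypothetical.

```python
from typing import Callable, Dict, List, Tuple


def run_chain(tasks: List[Tuple[str, Callable[[], None]]]) -> Dict[str, str]:
    """Run callables in order; after the first failure, skip everything downstream."""
    states: Dict[str, str] = {}
    failed = False
    for task_id, func in tasks:
        if failed:
            # Mirrors Airflow's state name for tasks whose upstream failed.
            states[task_id] = "upstream_failed"
            continue
        try:
            func()
            states[task_id] = "success"
        except Exception:
            states[task_id] = "failed"
            failed = True
    return states


def ok() -> None:
    """Placeholder for a task that completes normally."""


def boom() -> None:
    """Placeholder for a task that raises during scraping."""
    raise RuntimeError("scrape failed")


states = run_chain([
    ("get_url_data", ok),
    ("scrape", boom),          # hypothetical task id
    ("write_to_sheet", ok),    # hypothetical task id
])
print(states)
```

In real Airflow the same outcome is visible in the UI, where the failed task and the tasks after it are shown in different states.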

Combining the Airflow Architecture with Docker

Docker is an open-source platform for building, deploying, and running applications. To isolate the Airflow development environment and avoid version mismatches at deployment time, we built our own container image on top of the official Apache Airflow Docker image[3]. Network configuration and port forwarding were easy to set up. Thanks to Docker, we quickly get past the package incompatibilities and version problems we run into during development by rebuilding and redeploying the container.

Advantages:

- Time saved when unexpected errors come up during development

- Network and data storage configuration that is easy to manage

- Errors caught and resolved in an isolated environment

- A container setup that stays consistent across every deployment

Challenges We Faced During Development

Before starting the project we held many brainstorming sessions to identify the critical points. As we added features to our initial designs, the structure grew more complex. At first we ran everything on a Google Cloud VM, but once we had more than one project, management and deployment became difficult, so we decided to move to Docker. When Airflow or the server failed, we kept up our development pace by deleting the container and redeploying it. Adding error-handling modules to our automation project was important, because our clients expect a daily product report and any error delayed delivery.

References

- [1],[2] – https://airflow.apache.org/docs/apache-airflow/stable/concepts/overview.html

- [3] – https://hub.docker.com/r/apache/airflow
